CleanCLI: Building a Faster, Cleaner PowerShell Experience

An open-source project focused on creating a faster, cleaner, and more portable PowerShell terminal experience without unnecessary dependencies or overhead.

For most developers and sysadmins, terminal customization starts innocently.

You tweak the prompt, then add a helper function, then pull in a theme framework. Maybe you install Oh My Posh, Oh My Zsh, or a few PSReadLine plugins. A year later your PowerShell profile has become a small ecosystem with internet dependencies, startup overhead, random helper scripts from 2010 (… I might need that random script!), and enough conditional logic to qualify as infrastructure.

Mine certainly did.

Over the years, I’ve bounced between Windows desktops, laptops, VMs, and remote environments trying to maintain a terminal experience that felt consistent without becoming fragile. The same problems kept surfacing:

- some prompt frameworks would occasionally stall waiting on internet checks or update systems

- large git repositories (50K+ loose files) could noticeably slow prompt rendering

- non-git directories still paid the cost of git detection logic

- portability across machines became increasingly awkward

- my profile had turned into an archaeological dig of old functions and experiments

Eventually I decided to stop patching around the problem and build something intentionally simpler.

That project became CleanCLI.

What Is CleanCLI?

CleanCLI is an offline-first native PowerShell prompt and PSReadLine setup focused on responsiveness, portability, and sane defaults for day-to-day terminal work.

The goal wasn’t to recreate every feature from existing prompt ecosystems. I wanted something that felt native to PowerShell, started in under a second, handled large repositories gracefully, worked entirely offline, traveled cleanly between machines without a suitcase of dependencies, and still made sense when I came back to it six months later.

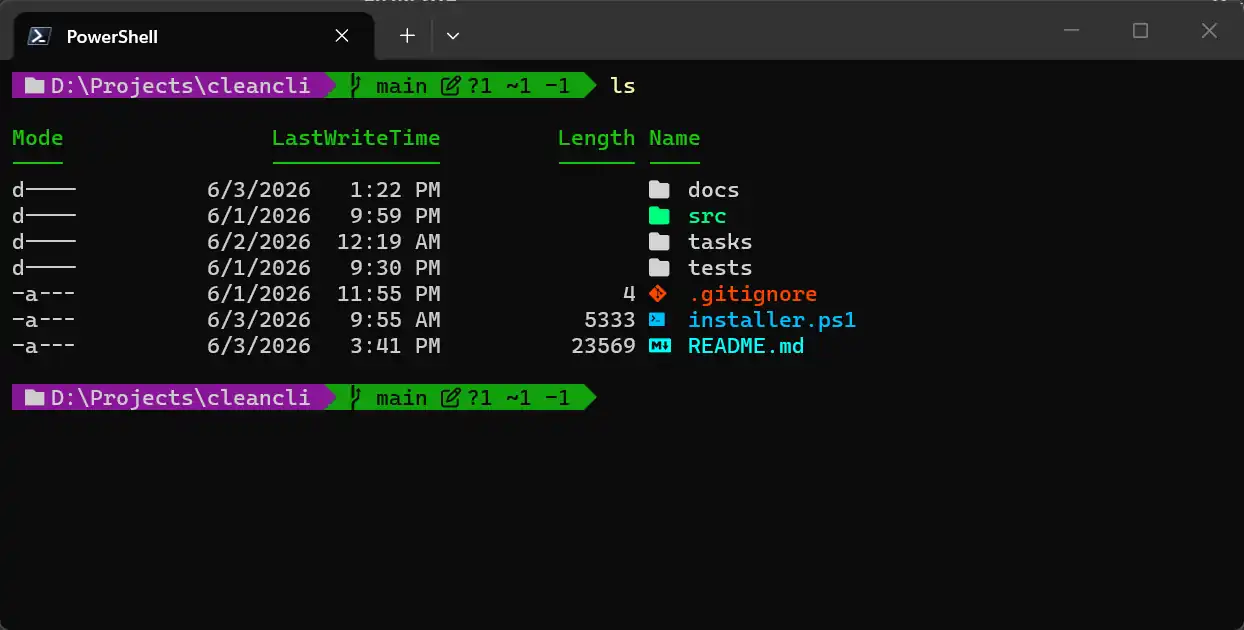

CleanCLI’s prompt: branch, git status indicators, optional right-aligned segment. Nerd Font glyphs are optional.

The project leans heavily into local-first behavior. Git status operations are timeout-bounded and cached. Outside a repository, CleanCLI fails fast instead of probing endlessly for git metadata. Machine-specific configuration is layered cleanly instead of embedding endless conditional logic into a single profile script.

A surprising amount of the work ended up being about restraint.
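To make "timeout-bounded and cached" concrete, here is a minimal sketch of the general technique. It is not CleanCLI's actual code: the function name `Get-BoundedGitStatus`, the 300 ms budget, and the 5 second cache lifetime are all assumptions chosen for illustration.

```powershell
# Hypothetical sketch: names, timeout, and cache lifetime are assumptions.
$script:StatusCache = @{}

function Get-BoundedGitStatus {
    param(
        [string]$RepoPath = (Get-Location).Path,
        [int]$TimeoutMs = 300,     # assumed per-prompt time budget
        [int]$CacheSeconds = 5     # assumed cache lifetime
    )

    $now = Get-Date
    $hit = $script:StatusCache[$RepoPath]
    if ($null -ne $hit -and ($now - $hit.Time).TotalSeconds -lt $CacheSeconds) {
        return $hit.Value          # fresh enough: no git process at all
    }

    $psi = New-Object System.Diagnostics.ProcessStartInfo
    $psi.FileName = 'git'
    $psi.Arguments = 'status --porcelain --branch'
    $psi.WorkingDirectory = $RepoPath
    $psi.RedirectStandardOutput = $true
    $psi.RedirectStandardError = $true
    $psi.UseShellExecute = $false
    $psi.CreateNoWindow = $true

    $value = $null
    $proc = $null
    try {
        $proc = [System.Diagnostics.Process]::Start($psi)
        # Read both streams asynchronously so a full pipe cannot block git.
        $stdout = $proc.StandardOutput.ReadToEndAsync()
        $null = $proc.StandardError.ReadToEndAsync()
        if ($proc.WaitForExit($TimeoutMs)) {
            $value = $stdout.Result
        } else {
            $proc.Kill()           # over budget: give up on this render
        }
    } catch {
        $value = $null             # git missing or directory invalid
    } finally {
        if ($proc) { $proc.Dispose() }
    }

    # Cache failures too, so a slow repository is not retried on every prompt.
    $script:StatusCache[$RepoPath] = @{ Time = $now; Value = $value }
    return $value
}
```

A `$null` result means "status unavailable right now", and a prompt function can simply skip the status indicators in that case. Counting consecutive timeouts per repository would be one way to decide when to stop asking altogether.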

The Performance Problem

One of the biggest motivators was prompt responsiveness in large repositories.

Modern prompt frameworks often do a lot of work every time the prompt renders.

Individually, none of that work is terrible. Together, especially on Windows or in large repositories, the latency becomes noticeable.

CleanCLI takes a more defensive approach.

Outside a git repository, it returns immediately without invoking git at all. Inside repositories, it parses .git/HEAD directly for branch information and bounds expensive git status calls with timeouts and caching. If a repository is consistently slow, it automatically degrades to lighter-weight branch-only status rather than punishing every prompt render.

That sounds small until you spend all day in a terminal.
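Reading the branch straight from `.git/HEAD` can be sketched in a few lines. This is an illustration of the idea rather than CleanCLI's implementation: `Get-GitBranchFromHead` is a hypothetical name, and the sketch assumes `.git` is a plain directory, so worktrees and submodules (where `.git` is a pointer file) are not handled.

```powershell
# Hypothetical sketch: finds the branch without starting a git process.
function Get-GitBranchFromHead {
    param([string]$StartPath = (Get-Location).Path)

    $dir = $StartPath
    while ($dir) {
        $headFile = Join-Path (Join-Path $dir '.git') 'HEAD'
        if (Test-Path -LiteralPath $headFile -PathType Leaf) {
            $head = Get-Content -LiteralPath $headFile -TotalCount 1
            if (-not $head) { return $null }

            # Normal case: HEAD is a symbolic ref to a branch.
            if ($head -match '^ref: refs/heads/(.+)$') {
                return $Matches[1]
            }

            # Detached HEAD: the file holds a raw commit hash; shorten it.
            return $head.Substring(0, [Math]::Min(7, $head.Length))
        }
        # Move one level up; Split-Path returns an empty string at the root.
        $dir = Split-Path -Path $dir -Parent
    }

    return $null   # no .git found on the way up: not a repository
}
```

Outside a repository the loop only performs a handful of cheap file-existence checks before returning `$null`, which is what lets a prompt skip every git-related step.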

Portable Configuration Without the Chaos

Another part of the project came from wanting a terminal environment that could travel between machines without becoming a giant block of conditionals.

CleanCLI supports machine profiles layered on top of shared defaults.

That means a desktop can enable richer prompts and right-aligned status segments, while a VM or remote shell can automatically fall back to lightweight ASCII rendering and simpler git behavior.

The same profile works everywhere without turning into:

if ($env:COMPUTERNAME -eq ...)

repeated fifty times.
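The layering idea can be illustrated with plain hashtables. Everything named here is hypothetical (the setting keys, the machine names, and the `Merge-Settings` and `Get-EffectiveSettings` helpers); CleanCLI's real configuration format is described in its README.

```powershell
# Hypothetical shared defaults.
$defaults = @{
    Glyphs       = 'nerdfont'
    RightSegment = $true
    GitMode      = 'full'
}

# Hypothetical per-machine overrides: only the differences are listed.
$machineProfiles = @{
    'BUILD-VM' = @{ Glyphs = 'ascii'; RightSegment = $false; GitMode = 'branch-only' }
    'LAPTOP'   = @{ RightSegment = $false }
}

function Merge-Settings {
    param([hashtable]$Base, [hashtable]$Override)

    $merged = $Base.Clone()            # shallow copy keeps the defaults untouched
    if ($null -ne $Override) {
        foreach ($key in $Override.Keys) {
            $merged[$key] = $Override[$key]
        }
    }
    return $merged
}

function Get-EffectiveSettings {
    param([string]$MachineName = [Environment]::MachineName)

    # An unknown machine has no entry, so it simply gets the defaults.
    Merge-Settings -Base $defaults -Override $machineProfiles[$MachineName]
}
```

The profile script then reads a single settings object, for example `(Get-EffectiveSettings).GitMode`, and never needs to know which machine it is running on.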

Native, Offline, and Self-Contained

One design choice I ended up caring about more than expected was avoiding runtime dependency chains.

CleanCLI ships with its own offline native icon mappings, prompt rendering, and configuration system. It can integrate with tools like Terminal-Icons when desired, but it works well without them as long as your terminal uses a Nerd Font.

That matters more than it sounds.

A surprising amount of modern terminal customization assumes internet access, available package registries, downloadable themes, remote metadata, and background update systems. Those assumptions break down quickly in offline environments, remote shells, isolated systems, or simply when you want your shell to start immediately.

I wanted a setup that felt durable.

Modular Shell Behavior Instead of Profile Spaghetti

One of the architectural decisions that evolved during the project was treating shell behavior as composable modules rather than one giant PowerShell profile script.

The classic profile loaded everything on every machine whether it needed it or not. Disabling behavior meant hunting through a several-hundred-line file and commenting out sections.

CleanCLI moves toward a modular model instead. Capabilities are toggleable and composable, so a desktop gets richer prompts and enhanced listing while a VM loads only what it needs.

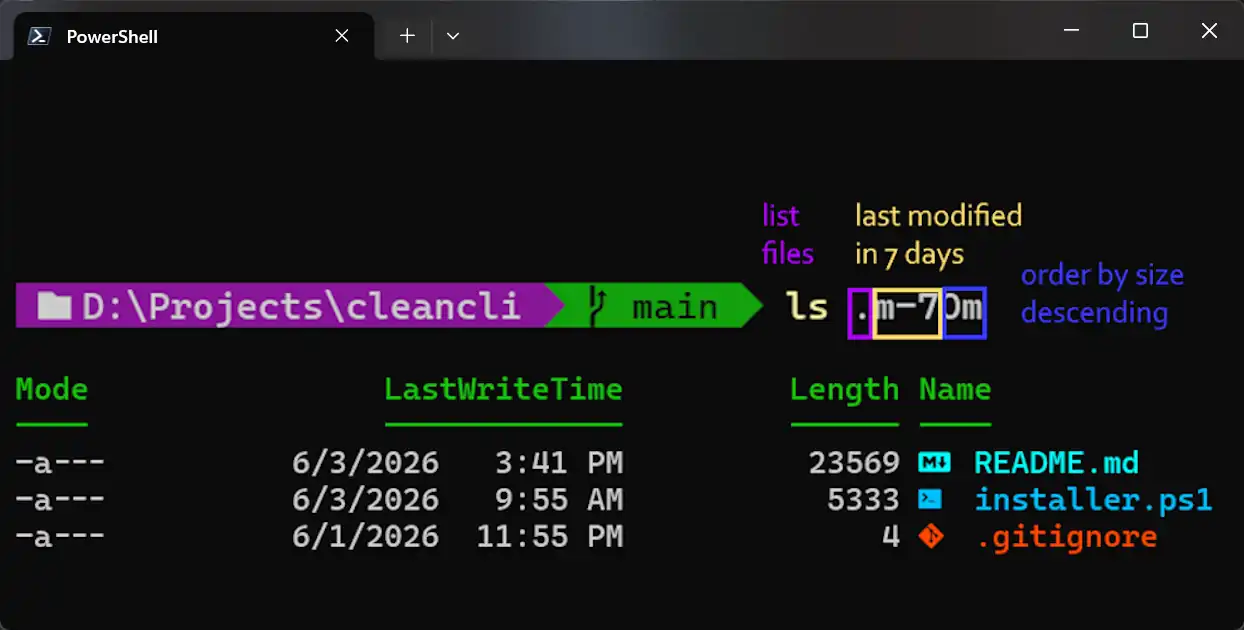
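One way to picture toggleable capabilities is a small registry of setup blocks plus a set of switches. The sketch below is an assumption-heavy illustration: the capability names and the `Enable-Capabilities` function are hypothetical, and each block returns a marker string where a real setup would dot-source a module file.

```powershell
# Hypothetical registry: capability name -> script block that sets it up.
$capabilities = [ordered]@{
    GitSegment      = { 'GitSegment loaded' }
    EnhancedListing = { 'EnhancedListing loaded' }
    UnixAliases     = { 'UnixAliases loaded' }
}

# Hypothetical toggles for one machine; anything missing counts as off.
$toggles = @{
    GitSegment      = $true
    EnhancedListing = $true
    UnixAliases     = $false
}

function Enable-Capabilities {
    param(
        [System.Collections.IDictionary]$Registry,
        [hashtable]$Toggles
    )

    foreach ($name in $Registry.Keys) {
        if ($Toggles[$name]) {
            # Only enabled capabilities ever run their setup code.
            & $Registry[$name]
        }
    }
}

$loaded = @(Enable-Capabilities -Registry $capabilities -Toggles $toggles)
```

Turning a feature off becomes a one-line change to the toggles rather than a hunt through the profile for sections to comment out.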

A good example is the enhanced listing (ls) system.

PowerShell’s default file navigation experience has always felt noticeably weaker than mature Unix shells, particularly once you get used to zsh-style glob qualifiers and filtering workflows. Constantly jumping between Linux and Windows environments exposes those differences.

CleanCLI adds lightweight glob-based filtering inspired by zsh without requiring external shell emulation layers or replacing PowerShell itself.

ls .m-7Om # files modified in the last 7 minutes

ls /Fsrc* # non-empty source directories

Both return native PowerShell objects, so they pipe cleanly into anything downstream.

Qualifier filtering in action: by modification time, size, type. No shell plugins required.

While the bareword qualifiers work out of the box, you can also use explicit flags for more complex queries:

ls .L+100k*.log # filter log files larger than 100KB

ls -File -LargerThan 100kb -NameLike *.log # same thing with explicit flags
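For comparison, the same "log files larger than 100KB" query can be written with stock cmdlets only. This is not how CleanCLI implements its flags; `Get-LargeFile` is a hypothetical wrapper, shown to make clear what the shorthand saves you from typing.

```powershell
# Hypothetical helper built only from built-in cmdlets.
function Get-LargeFile {
    param(
        [string]$Path = '.',
        [string]$NameLike = '*',
        [long]$LargerThan = 0
    )

    # -File limits results to files; -Filter lets the provider match names.
    Get-ChildItem -LiteralPath $Path -File -Filter $NameLike |
        Where-Object { $_.Length -gt $LargerThan }
}

# The results are ordinary FileInfo objects, so they pipe like any other.
Get-LargeFile -NameLike '*.log' -LargerThan 100kb |
    Sort-Object Length -Descending |
    Select-Object Name, Length, LastWriteTime
```

Because the output is made of regular `FileInfo` objects, sorting, selecting, or exporting works exactly as it does after a plain `Get-ChildItem`.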

The long-term goal isn’t to turn PowerShell into bash or zsh. PowerShell’s object pipeline is genuinely powerful and worth preserving.

The goal is smoother interoperability between ecosystems. If someone wants a more Unix-like experience, modules can progressively enable things like touch, familiar navigation aliases, qualifier filtering, and cross-platform command conventions, without introducing heavyweight compatibility dependencies or forcing a completely different shell runtime. Opt-in ergonomics, not shell replacement.

Have At It!

CleanCLI has been a particularly fun one to work on, sitting at the intersection of developer ergonomics, performance tuning, PowerShell internals, terminal UX, and a healthy amount of “why is this slower than it needs to be?”

If you’re interested in PowerShell workflows, prompt customization, or just want a faster terminal experience on Windows, the project is on GitHub: https://github.com/drlongnecker/cleancli

The README covers installation, machine profiles, prompt behavior, git handling, diagnostics, and some intentionally opinionated directory listing enhancements. _There is a POC installer script for