The Code Nobody Understands

AI codes faster than teams can understand, creating a new kind of technical debt.

The abstraction layer keeps moving. That’s the simplest framing of what’s happening in software development right now, and it helps explain why the usual metrics for team health aren’t keeping up.

Most developers stopped thinking about assembly code decades ago. The compiled languages we use do that work, and we’ve accepted (correctly) that not understanding register allocation is a fine trade for working at a higher level of abstraction. Later, managed languages removed memory management from the mental model. Every time an abstraction layer stabilized, the developers working above it could focus on the new problems at the new level, rather than the already-solved ones below.

In recent months, AI has been doing it again. The level we’re moving toward isn’t “write code that does X.” It’s “express intent clearly enough that generated code does X.” The abstraction is real and the productivity gains are real. What’s less visible is what the gap looks like when that layer doesn’t hold, when the “vibe” fails.

When the Gap Opens

The researchers watching this most carefully have settled on a term: cognitive debt. Margaret-Anne Storey’s March 2026 paper defines it as the erosion of shared understanding across a software system. It shows up not as bugs in the code, but as gaps in what any human being on the team actually knows about what the code does and why it does it that way. Technical debt manifests as friction when you try to change the system. Cognitive debt manifests as paralysis when something breaks and nobody knows where to look.

The distinction matters for leadership because technical debt is visible through standard engineering metrics but cognitive debt isn’t. Teams can have excellent code coverage, solid deployment frequency, and a codebase that’s effectively opaque to the people maintaining it.

What makes this pattern hard to catch is that it accumulates slowly and looks like productivity. Teams ship more features. Reviews move faster because there’s less custom logic to interrogate. Then something goes wrong in a way that’s genuinely hard to trace, and the team discovers the mental model they’d been working from didn’t match what the system was actually doing.

A version of this predates AI entirely: the legacy codebase nobody wants to touch because the person who understood it left and their understanding went with them. AI-assisted development can create that condition faster, team-wide, while the velocity numbers still look healthy. And unlike the legacy system problem, which was at least legible as a risk, cognitive debt at scale is new enough that most organizations don’t have language for it yet, let alone practices for managing it.

Storey’s paper introduces a related concept worth understanding alongside cognitive debt: intent debt, the erosion of captured rationale about what a system is supposed to do and why. As AI agents increasingly touch codebases alongside human teams, the absence of explicit intent creates a different fragility. Not just “nobody understands the code” but “nobody has written down what the code is for.” The two debts compound each other.

There’s a bigger picture here that likely needs its own article, but intent debt also accelerates because AI output is non-deterministic. The same prompt can produce different code on different runs and in different tools, so which version is “right”? The rationale for why a particular piece of code exists is more likely to be lost when the code itself isn’t deterministic.

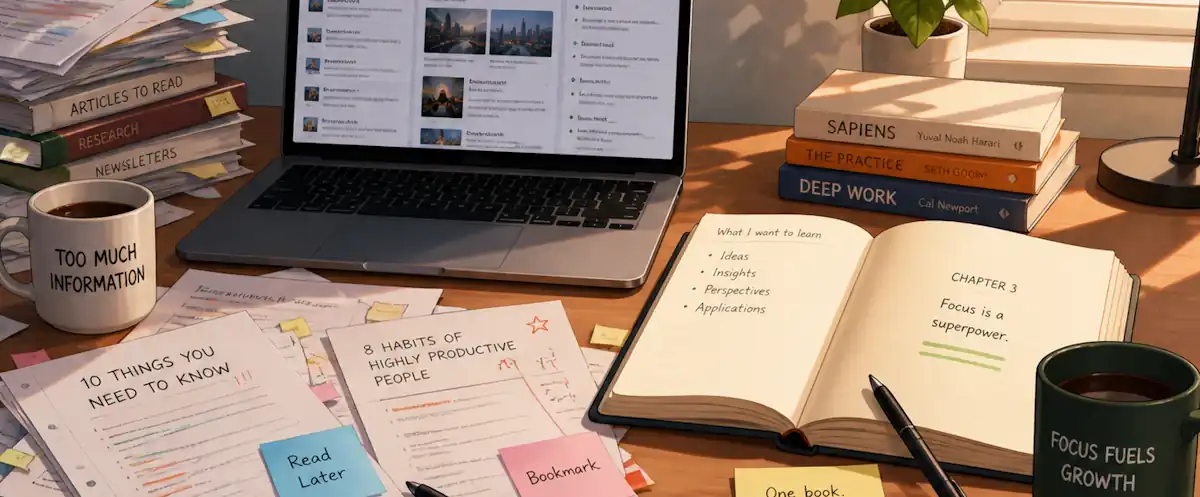

What the Learning Actually Requires

Thoughtworks Technology Radar 34, released this past week, frames the response as a return to disciplined engineering fundamentals: pair programming, spec-driven development, mutation testing, zero-trust reviews of generated code. These practices were well established before AI became widespread and are now less practiced precisely because AI makes them easy to skip.

The underlying problem, though, isn’t just which practices are being skipped, but that comprehension has stopped being treated as something you build deliberately. Each previous abstraction layer transition required developers to build fluency at the new level, not just above the old one. Developers who moved from assembly to C didn’t stop understanding what their programs were doing; they learned to reason at the new level of abstraction. The intent-to-generation transition asks for the same discipline, and it’s not happening by default.

Addy Osmani’s March 2026 essay on comprehension debt draws a useful distinction from the underlying research: passive delegation, or handing a problem to AI entirely, is what impairs skill formation. Active engagement with AI-generated code (asking it to explain its choices, interrogating edge cases, and pushing back on assumptions) didn’t show the same comprehension gap. I fully agree: the tool isn’t the problem. The problem is the passive relationship with the tool that many developers have taken.

In practice, the difference between building comprehension and skipping it comes down to a few habits that are genuinely in tension with the pressure to ship.

- Code reviews that interrogate generated output rather than just checking correctness.

- Pairing humans with AI on complex logic rather than delegating the logic entirely.

- Treating debugging sessions without AI assist as deliberate practice, in the same way a pilot maintains manual landing skills even when the autopilot is reliable.

None of this is novel. All of it requires treating comprehension as an investment rather than assuming it accrues automatically from the work. The lifelong-learner framing I keep coming back to is that the abstraction layers will keep moving. The developers who stay effective across transitions aren’t the ones who mastered the current tool; they’re the ones who stayed curious about what was happening underneath it and why. That disposition is harder to hire for and harder to maintain than tool proficiency, but it’s what actually transfers.

The Second-Order Question

Senior leaders I’ve talked to lately are appropriately focused on the first-order question: are teams shipping faster? The second-order question is getting less attention: are teams still capable of understanding what they shipped?

That’s not rhetorical. It has practical consequences for how you structure reviews, where you invest in learning, and what you look for when something fails in production. Technical debt gave leaders language for a problem engineers had been managing for decades. Most organizations now have habits and metrics for tracking it. Cognitive debt is newer, and we don’t have the same institutional patterns for measuring or managing it yet.

The discovery debt post I wrote in March was about delivery accelerating faster than understanding of what to deliver. Cognitive debt is the same pattern one layer down: code is being generated faster than the team’s understanding of what that code is doing.

Both are manageable, but both require treating comprehension as something you invest in deliberately, not something that accumulates on its own.