The Dashboard Nobody Believes

DORA metrics still look green. That doesn't mean the team understands what they shipped.

DORA metrics (deployment frequency, lead time for changes, change failure rate, mean time to recovery) were a genuine improvement over what came before. Measuring story points and PR counts felt like measuring motion rather than progress, and the DORA framework shifted attention toward delivery outcomes that actually mattered.

For most of the last decade, moving toward DORA was moving in the right direction. On my last team, we had a lot of success communicating progress and health through those measures.

However, the assumption embedded in those metrics is one we never needed to make explicit: that writing code and understanding code happened together. When a developer wrote a function, they were building a mental model alongside the implementation. When a team shipped a feature, someone could explain how it worked and why it was designed that way. Measuring velocity was a reasonable proxy for comprehension because both were coupled to the same act.

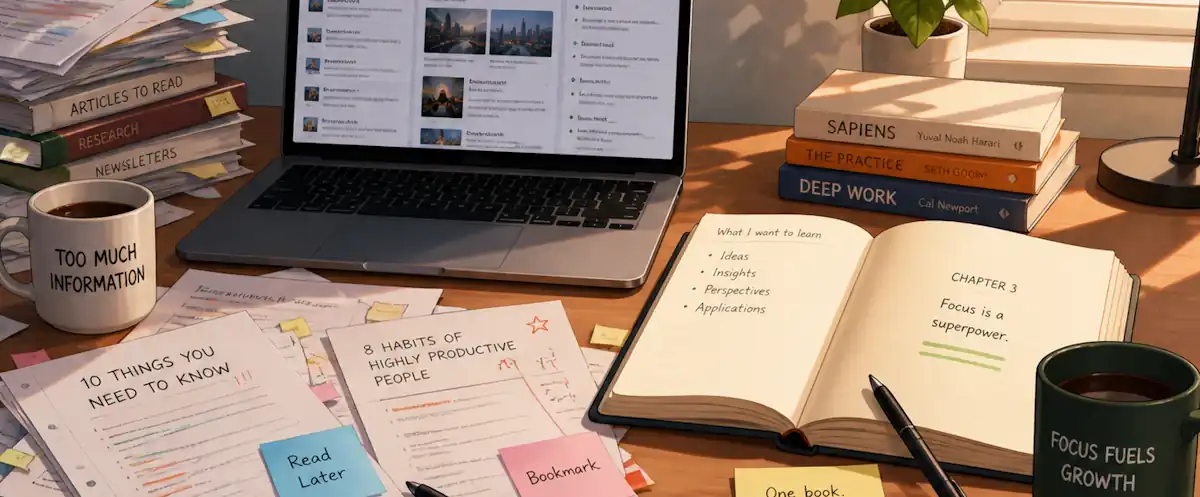

Last week I wrote about what happens when that coupling breaks: the cognitive debt that accumulates when AI generates code faster than teams can absorb it. Today I’m looking at the measurement side: our dashboards were calibrated for the coupled world, and most of us haven’t updated them for the one we’re actually working in.

What Teams Miss

Before AI assistance, there was a natural bottleneck in most engineering orgs: senior engineers could review code faster than junior engineers could produce it. This wasn’t an accident of process. It was structural. Junior engineers wrote slowly because they were figuring things out. The friction of that figuring was how knowledge transferred. Seniors could review quickly because they already had the mental model, and the review was a genuine quality gate because the production rate was bounded.

AI has quietly inverted this. A junior engineer with a capable coding agent can now produce code faster than any senior can critically audit it. As the queue builds, reviews get shallower and merge times fall, all while the dashboard records that as improvement.

PR counts climb, and the dashboard calls that productivity. It has no way to register the broken quality gate until the damage shows up in an incident review.
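The arithmetic behind that squeeze is simple enough to sketch. The numbers below are illustrative assumptions, not data from any team: a fixed weekly budget of senior review time, divided across a growing number of PRs.

```python
def review_minutes_per_pr(reviewer_minutes_per_week: float, prs_per_week: int) -> float:
    """Average review attention available per PR when the review budget is fixed."""
    if prs_per_week <= 0:
        raise ValueError("prs_per_week must be positive")
    return reviewer_minutes_per_week / prs_per_week


# Hypothetical team: 600 minutes of senior review time per week.
before_ai = review_minutes_per_pr(600, 20)  # 20 PRs per week
with_ai = review_minutes_per_pr(600, 80)    # 80 PRs per week, same reviewers

print(f"before: {before_ai:.1f} min/PR, with AI: {with_ai:.1f} min/PR")
```

Nothing in a merge-time chart distinguishes a review that got faster from a review that got thinner, which is why the per-PR attention figure is worth estimating separately.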

This isn’t a failure of discipline or intent. It’s an instrumentation failure. The metrics were calibrated for a world where production speed was the binding constraint. In that world, faster merges meant more throughput. That constraint has moved: code has become cheaper to produce than to understand.

The deeper problem is that DORA’s four measures were designed to capture delivery health, not comprehension health. A team can have a change failure rate below 5%, deploy daily, and recover from incidents in under an hour, all while no one on the team can explain why a critical architectural decision was made or what would break if a specific module were touched.

What to Look For Instead

I’ve yet to see a clean replacement metric set emerge. We’re in an instrumentation gap, and these ideas are starting points, not complete systems. What follows is what seems worth tracking based on what’s emerging from the research and what I’m seeing teams actually navigate.

Bus factor as a comprehension proxy. Most engineering orgs discuss bus factor informally.

How many engineers could explain, in the moment, the load-bearing logic of a given module without documentation?

Formalizing that question, even qualitatively at the team level, gives you a comprehension signal that existing tools tend to ignore. It degrades quietly and usually becomes visible only in post-incident reviews. The teams getting ahead of this are asking the question before the incident, not after.
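One way to formalize the question is a lightweight team survey. The sketch below assumes a hypothetical survey result that maps each module to the engineers who say they could explain its load-bearing logic without documentation; the module names, people, and threshold of two are all made up for illustration.

```python
from typing import Dict, List, Set


def comprehension_bus_factor(survey: Dict[str, Set[str]]) -> Dict[str, int]:
    """Count how many engineers claim they can explain each module unaided."""
    return {module: len(explainers) for module, explainers in survey.items()}


def at_risk_modules(survey: Dict[str, Set[str]], threshold: int = 2) -> List[str]:
    """Return modules whose explainer count falls below the threshold, sorted by name."""
    factors = comprehension_bus_factor(survey)
    return sorted(module for module, count in factors.items() if count < threshold)


# Hypothetical survey answers, not real data.
survey = {
    "billing": {"ana"},
    "auth": {"ana", "raj", "li"},
    "search": set(),
}

print(at_risk_modules(survey))
```

Self-reported answers will overstate comprehension, so treat the output as a prompt for conversation rather than a score.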

Verification depth over merge speed. Thoughtworks Technology Radar 34 frames the organizational shift well: high-performing engineering teams are moving from roughly 90% creation to 90% verification (acceptance criteria definition, intent capture, boundary testing) as AI handles more of the execution layer. If that’s where the skilled work is moving, measuring the quality of verification rather than the speed of merge is where the useful signal sits. Tracking mutation testing results rather than coverage percentage is one concrete example. Counting review comments that interrogate design decisions, rather than counting comments, is another.
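To see why mutation testing says more than coverage, here is a minimal hand-rolled sketch. Everything in it is hypothetical: the `is_adult` function, the three hand-written mutants, and the two test suites. Real mutation testing tools generate mutants automatically; this only illustrates the idea.

```python
from typing import Callable, List

Predicate = Callable[[int], bool]


def is_adult(age: int) -> bool:
    return age >= 18


# Hand-written mutants: small deliberate bugs a good suite should catch.
MUTANTS: List[Predicate] = [
    lambda age: age > 18,   # boundary shifted by one
    lambda age: age <= 18,  # comparison flipped
    lambda age: True,       # condition removed
]


def weak_suite(fn: Predicate) -> bool:
    """Executes every line of is_adult (full line coverage) but never probes the boundary."""
    return fn(30) is True


def strong_suite(fn: Predicate) -> bool:
    """Checks both sides of the boundary as well as a typical value."""
    return fn(30) is True and fn(18) is True and fn(17) is False


def mutation_score(suite: Callable[[Predicate], bool], mutants: List[Predicate]) -> float:
    """Fraction of mutants the suite kills, meaning the suite fails on them."""
    killed = sum(1 for mutant in mutants if not suite(mutant))
    return killed / len(mutants)


print(f"weak suite:   {mutation_score(weak_suite, MUTANTS):.2f}")
print(f"strong suite: {mutation_score(strong_suite, MUTANTS):.2f}")
```

Both suites pass against the real function and both would report full line coverage for it, yet only the second one kills every mutant. That gap is the verification depth a coverage number hides.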

Intent capture frequency. Storey’s research identifies intent debt, specifically the absence of documented rationale, as compounding cognitive debt in ways that become critical when AI agents start touching systems alongside humans. The practical proxy is Architectural Decision Records: not whether they exist, but how recently and consistently they’re being written. A team writing ADRs after significant architectural choices is building something both their future colleagues and their AI tooling can actually use.
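Recency and consistency can be approximated from ADR dates alone. This sketch assumes you can extract a list of dates on which ADRs were written (for example from file metadata or commit history); the dates below are invented, and the two functions are illustrative rather than an established metric.

```python
from datetime import date
from typing import List, Optional


def days_since_last_adr(adr_dates: List[date], today: date) -> Optional[int]:
    """Days since the most recent ADR, or None if none have been written."""
    if not adr_dates:
        return None
    return (today - max(adr_dates)).days


def longest_gap_days(adr_dates: List[date]) -> int:
    """Longest stretch, in days, between consecutive ADRs (0 if fewer than two)."""
    ordered = sorted(adr_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return max(gaps, default=0)


# Hypothetical ADR history, not real data.
adr_dates = [date(2025, 1, 10), date(2025, 2, 1), date(2025, 5, 1)]
today = date(2025, 6, 1)

print(days_since_last_adr(adr_dates, today), longest_gap_days(adr_dates))
```

A long gap is not proof of missing rationale, since some quarters have no significant architectural choices. It is a cue to ask whether decisions were made in that window and where the reasoning lives.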

None of these are as clean or quantifiable as deployment frequency. That’s part of what makes this period genuinely hard: we’re trying to measure something that was previously measured implicitly through the work itself.

The Frame That Helps

The teams I’ve seen navigate this well in the past six to eight months share one ground rule: they treat shared understanding as a deliverable, not a byproduct. Understanding gets sprint time. Engineers are expected to explain systems they didn’t write. Intent gets documented before the AI prompt, not after. Generated code gets reviewed with the question “does the team know why this does what it does?” alongside “does it pass the tests?”

That framing matters because it changes what’s counted as “work”. Historically, documentation and knowledge transfer were the slow-down you accepted to keep new engineers productive. In an AI-assisted environment, they’re the mechanism by which the team retains the ability to safely evolve the system at all. That’s a different argument for the same practice, and it tends to land differently in planning conversations.

The measurement discipline will catch up. It usually does once practitioners figure out what they’re actually trying to protect. In the meantime, bus factor, verification depth, and ADR frequency are imperfect proxies that are better than nothing, and considerably better than a green change failure rate that says nothing about whether anyone understands the system it’s measuring.

The question worth putting to your team isn’t “are our DORA metrics trending the right direction?” It’s the one the research surfaces: if your AI tools disappeared tomorrow, how much of your system would your team actually own?

That answer is more useful than the dashboard.