Our Dwindling Talent Pipeline

AI handles the entry-level work. So where do entry-level skills come from now?

The work that turned junior engineers into capable mid-level engineers was mostly work nobody else wanted to do.

I know this from experience. Thirty years ago, I started in development stitching input screens together on an AIX mainframe in C. The work was tedious, and that was precisely the point: each screen taught me how systems actually connected under the surface, and why they broke in the ways they broke. That informal apprenticeship, embedded in unglamorous work, built the foundation that everything since has rested on. I wouldn’t trade any of it.

What I recognize is that most junior developers today don’t start there, and what that work used to teach is disappearing faster than anything is replacing it.

GitHub’s 2024 survey of developers using Copilot found that 88% reported increased productivity, with the biggest gains in exactly the tasks that used to serve as training ground: boilerplate generation, test setup, documentation. The productivity gains are real. The questions about what gets lost are also real, and most organizations aren’t asking them explicitly.

This connects directly to something I examined last month in The Code Nobody Understands: cognitive debt, where AI generates code faster than teams can understand what it’s doing. For junior engineers, the compression point is earlier. It’s not just that they understand AI-generated code less deeply; the skills enabling that understanding aren’t forming at all. When cognitive debt is already a team-wide concern, a pipeline of engineers who never developed the foundational comprehension in the first place makes the problem compounding rather than static.

The Unwritten Curriculum

The work that AI is replacing wasn’t just tedious. It had specific educational content that was inseparable from the tedium.

Writing boilerplate code teaches you what the boilerplate is doing. When an AI generates it, you get the output without the understanding. That gap is manageable for an experienced developer who can audit what was generated; it’s harder for someone still building the mental models that make auditing possible. Debugging unfamiliar code follows the same logic. It teaches navigation, hypothesis formation, and systems reasoning about code you didn’t write. When AI debugging assistance provides answers without the process, neither skill develops.

Manual testing and QA, particularly in edge cases, teaches something different: how users actually interact with a product versus how designers intend them to. That gap-awareness doesn’t come from test coverage reports. It comes from the experience of finding the weird thing a user does that nobody anticipated.

None of this is an argument against using AI tools. It’s an observation that the informal curriculum embedded in junior work was real, and it’s being disrupted faster than we’ve thought through how to replace it.

Four Years Out

The downstream effect is a lag that won’t be visible for a few years. Junior engineers hired today will be mid-level engineers in three or four years. The skills they develop now will shape the capability level of those mid-level engineers.

If we’re handing off the routine work to AI and replacing it with… what, exactly? That’s the more interesting version of the question. If AI is pushing senior developers further up the stack toward architecture and systems design, the same logic should extend downward. Junior work ought to elevate too, toward the meta-level skills that the grunt work was always developing in the background.

A junior who doesn’t know polymorphism or the Rule of Five from memory isn’t necessarily behind, as long as they know how to find those concepts and understand them well enough to catch when AI-generated code applies them wrong. LinkedIn’s 2026 Skills on the Rise report shows the fastest-growing entry-level AI skills now include LangChain, RAG pipelines, and vector database knowledge. The floor is moving.

What makes that movement urgent is the employment data. Addy Osmani’s 2026 analysis cites a Harvard study of 62 million workers that quantifies the employment effect of generative AI adoption directly.

Industry veterans describe the downstream consequence as “slow decay”: an ecosystem that stops training its replacements and creates a leadership vacuum a decade out. The question isn’t just whether today’s juniors are learning the right things. It’s whether there will be enough of them developing at all.

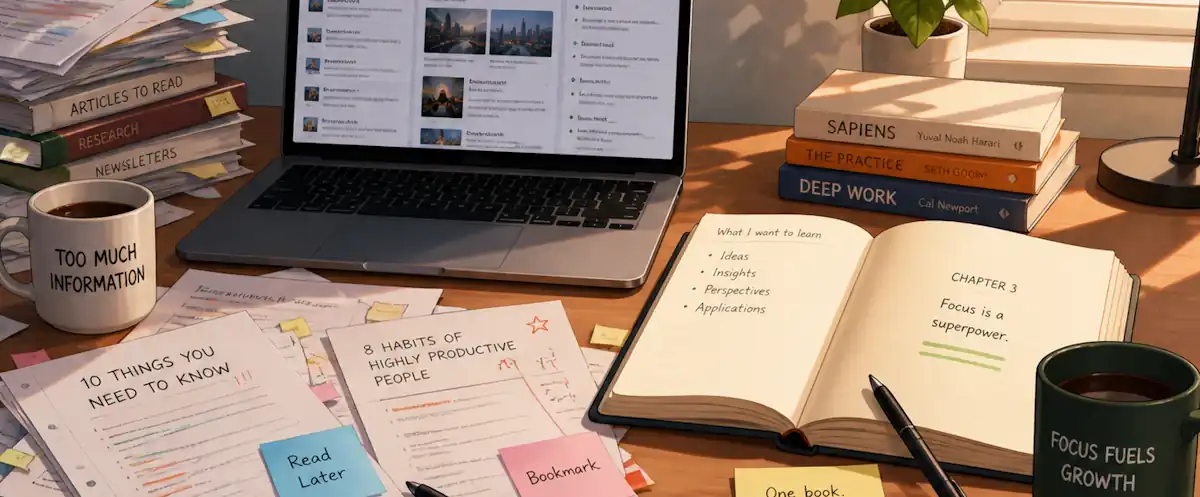

The better organizations are thinking explicitly about this: designing junior onboarding around the skills that need to develop, not around the tasks that need to get done. Code review used as a pedagogical tool rather than a quality gate. Structured pair programming with explicit knowledge-transfer goals rather than just efficient output.

The less thoughtful version is treating AI-assisted junior development as equivalent to traditional junior development because the output looks similar. The output might be similar. The learning is not.

What Sprint Reviews Miss

The practical challenge is that AI-assisted work looks good in sprint reviews regardless of where someone is in their career. Code ships, tests pass, and PRs get approved. Those signals tell you about output quality, not capability development. The more useful question is whether the engineer, at any experience level, can reason through what the AI produced and catch it when it’s wrong. Nobody has a complete playbook for developing that skill yet. The teams making progress are the ones asking it openly, not just directing it downward.

I wrote about mentorship as a leadership amplifier a year ago, focused on coaching as a complement to technical capability. What’s changed since then is the context in which that mentorship happens. It’s harder now because the senior engineer is also navigating unfamiliar territory. A coaching relationship built on “let me show you how to do this” looks different when the senior engineer is still working out how to evaluate what AI produces. The honest version of that conversation is probably “let’s figure out together how we catch when this is wrong”—which is a different kind of mentorship than either side has practiced.

The organizations managing it well create explicit space for the figuring-out at every experience level, rather than treating it as a problem specific to newer engineers.

The Honest Position

I’m genuinely uncertain about how this plays out. The AI productivity gains are real and material. Teams are shipping faster, and the work quality at the output level is often better than what junior engineers would have produced without assistance.

What I’m less certain about is whether we’re trading short-term output quality for long-term capability depth. The senior engineers who are excellent now are excellent partly because they went through “the hard times.” If the next generation of senior engineers never goes through equivalent challenges, they might not become equivalent senior engineers.

Or maybe the skills that matter are different now, and what looks like a gap is actually a transition. Maybe auditing AI-generated code develops judgment that manual code writing used to develop. I genuinely don’t know, but I’m excited to keep exploring it and learning how to manage it better.

What I do know is that most organizations aren’t asking the question openly and explicitly. They’re measuring the output and assuming the learning follows. That assumption will need to be proven out before the pipeline becomes a talent trickle.